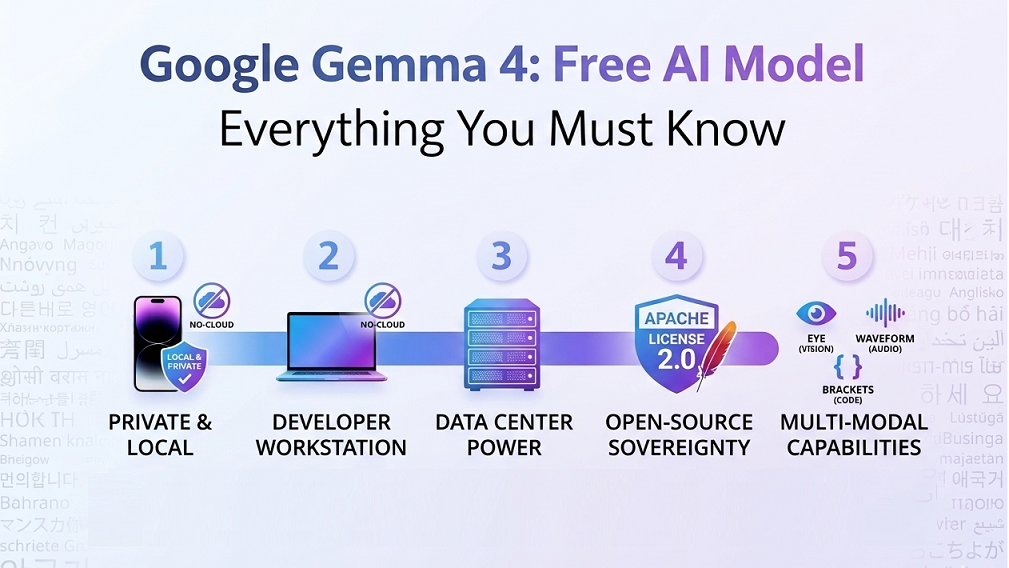

What if you could run a powerful AI model directly on your phone, your laptop, or your own server — completely free, with no subscriptions, no cloud dependency, and no data leaving your device? That’s exactly what Google just made possible. On April 2, 2026, Google DeepMind officially launched Gemma 4, its most capable open-source AI model family to date. Whether you’re a developer, a researcher, a student, or simply someone curious about AI, this launch is one of the most significant technology events of 2026 — and here’s everything you need to know about it.

What Exactly Is Google Gemma 4?

Gemma 4 is Google’s latest family of open-weight AI models, built using the same research and technology that powers Gemini 3 — Google’s flagship proprietary AI. The key difference? Gemma 4 is free, open-source, and can be downloaded and run on your own hardware. It comes in four sizes to suit different needs:

- Effective 2B (E2B) — Designed for smartphones and ultra-low-memory devices, runs in under 1.5GB of RAM.

- Effective 4B (E4B) — Ideal for mid-range phones and IoT devices, balancing speed and capability.

- 26B Mixture-of-Experts (MoE) — Built for speed on developer hardware, activating only 3.8B parameters during inference for ultra-fast responses.

- 31B Dense — The most powerful of the four, optimised for raw quality and fine-tuning on workstations and data centres.
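To put the sizes above in perspective, here is a rough back-of-the-envelope sketch. The parameter counts come from the list above; the bytes-per-parameter figures are ordinary storage widths, not published Gemma 4 requirements, and the totals ignore activations, the KV cache, and runtime overhead. The function names are made up for illustration.

```python
# Rough arithmetic for the model sizes listed above.
# ASSUMPTION: weights are stored at a uniform 16, 8, or 4 bits per parameter.
# Real memory use will be higher (activations, KV cache, runtime overhead).

GIB = 1024 ** 3


def weight_footprint_gib(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory needed just to hold the weights, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / GIB


def active_fraction(active_billion: float, total_billion: float) -> float:
    """Share of parameters used per token in a Mixture-of-Experts model."""
    return active_billion / total_billion


if __name__ == "__main__":
    # 3.8B of 26B parameters are active during inference (figures from the article).
    print(f"26B MoE active share: {active_fraction(3.8, 26):.1%}")
    for name, size in [("26B MoE", 26), ("31B Dense", 31)]:
        for bits in (16, 8, 4):
            gib = weight_footprint_gib(size, bits)
            print(f"{name} @ {bits}-bit: ~{gib:.1f} GiB for weights alone")
```

Note that a Mixture-of-Experts model generally still needs all of its weights loaded in memory. The smaller active share mainly reduces the compute per token, which is where the speed benefit comes from.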

What makes this launch particularly significant is the licence. For the first time in the Gemma series, Google has released these models under the Apache 2.0 licence, one of the most permissive open-source licences available. This means anyone can use, modify, and even commercialise Gemma 4, subject only to the licence’s light conditions, such as keeping the licence text and copyright notices with redistributed copies. Google explicitly described this as a direct response to feedback from its developer community, positioning Gemma 4 as a tool that gives users complete control over their data, infrastructure, and models.

How Good Is Gemma 4 Compared to Other AI Models?

This is where Gemma 4 genuinely surprises. The performance numbers are hard to ignore:

- The 31B Dense model ranks #3 among all open AI models in the world on the Arena AI text leaderboard.

- The 26B MoE model ranks #6 on the same benchmark.

- Both models outperform AI models up to 20 times their own size — a remarkable achievement in AI efficiency.

- Google DeepMind CEO Demis Hassabis called them “the best open models in the world for their respective sizes.”

This level of performance at such a small parameter count is the result of what Google calls “intelligence-per-parameter” — squeezing frontier-level capability into a model small enough to run on hardware most people already own. You no longer need expensive cloud-based AI infrastructure to access world-class AI. A model running on a consumer GPU — or even a smartphone — can now deliver results that rival systems costing thousands of dollars a month to operate.

What Can Gemma 4 Actually Do?

Gemma 4 is not just a chatbot. It is a full multimodal, agentic AI model built for real-world tasks and complex workflows. Here is what it supports straight out of the box:

- Agentic workflows: Native function-calling and structured JSON output mean Gemma 4 can autonomously plan and execute multi-step tasks (browsing data, formatting results, and calling external APIs) without constant human input.

- Vision, video & audio: All four models process images and video natively. The E2B and E4B edge models additionally support audio input, enabling real-time speech recognition and translation with no internet connection required.

- Code generation: Gemma 4 can write, complete, and fix code entirely offline, turning any laptop or workstation into a private, local-first AI coding assistant.

- 140+ languages: Natively trained on over 140 languages, making it one of the most multilingual open models ever released — a major advantage for anyone building for global or regional audiences.

- Long context windows: Edge models support up to 128,000 tokens and larger models up to 256,000, meaning you can feed entire documents, codebases, or datasets into a single prompt.
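As a quick sanity check on the context windows above, the sketch below estimates whether a document is likely to fit. The limits are the ones quoted in the list; the ratio of about four characters per token is only a common rule of thumb for English text and is not derived from Gemma 4’s tokenizer, so treat the result as a rough guide. All names here are hypothetical.

```python
# Rough check of whether a text is likely to fit in a context window.
# ASSUMPTION: ~4 characters per token, a rule of thumb for English text.
# The real count depends on the model's tokenizer and on the language used.

CONTEXT_LIMITS = {"edge": 128_000, "large": 256_000}  # tokens, from the article
ASSUMED_CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count, rounding up."""
    return -(-len(text) // ASSUMED_CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, tier: str, reserve_for_output: int = 2_000) -> bool:
    """True if the estimated prompt plus reserved output tokens fits the limit."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_LIMITS[tier]


if __name__ == "__main__":
    document = "word " * 120_000  # stand-in for a long document (600,000 characters)
    for tier in CONTEXT_LIMITS:
        print(tier, "fits" if fits_in_context(document, tier) else "too long")
```

For anything close to the limit, counting tokens with the model’s actual tokenizer is the safer approach.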

Beyond these headline features, Gemma 4 also introduces native system prompt support, configurable thinking modes for advanced reasoning, and a hybrid attention mechanism that delivers the speed of a lightweight model without sacrificing deep contextual understanding. It is, in every sense, built for production — not just experimentation.
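To make the agentic workflow idea more concrete, here is a minimal sketch of what an application does with a structured tool call. The JSON shape, the `get_weather` tool, and the hard-coded model output are all illustrative assumptions; this is not Gemma 4’s actual function-calling format, which is defined in Google’s documentation.

```python
import json

# ASSUMPTION: the model returns a JSON object with "name" and "arguments" keys.
# This shape is illustrative only, not Gemma 4's documented output format.


def get_weather(city: str) -> str:
    """Hypothetical local tool; a real one would query a weather service."""
    return f"Weather lookup requested for {city}"


# Registry of functions the application is willing to run on the model's behalf.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call and run the matching registered function."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        # Refuse anything that is not explicitly registered.
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # Stand-in for text a model might produce; no model is called here.
    simulated_output = '{"name": "get_weather", "arguments": {"city": "Kolkata"}}'
    print(dispatch_tool_call(simulated_output))
```

Keeping an explicit registry means the model can only trigger functions you have chosen to expose, which matters once tools can touch files or external APIs.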

Why Does the Apache 2.0 Licence Matter So Much?

Licensing might sound like a legal technicality, but for the AI community it is everything. Previous open AI models often came with significant usage restrictions. Apache 2.0 changes all of that. Here is why it is such a big deal:

- Commercial freedom: Anyone can build and sell products powered by Gemma 4 without paying Google a single rupee or dollar.

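- Light attribution requirements: Apache 2.0 does not require you to credit Google in your product’s branding or interface, but if you redistribute the model or a modified version you must include a copy of the licence and keep the existing copyright and attribution notices.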

- Full modification rights: You can fine-tune, retrain, or completely reshape the model for your specific use case.

- On-premises deployment: Deploy Gemma 4 entirely on your own servers, with no data ever passing through Google’s infrastructure.

- Digital sovereignty: Organisations in regulated industries — healthcare, finance, legal — can now run advanced AI inside their own controlled environments.

This makes Gemma 4 fundamentally different from most AI tools available today. For organisations concerned about data privacy, competitive secrecy, or regulatory compliance, this is a transformative shift. Your data stays on your device, your model runs on your hardware, and no third party has any visibility into what you are building or doing with it.

How Do You Get Started with Gemma 4?

Getting started with Gemma 4 is remarkably straightforward, regardless of your technical level. Here is where and how you can access it:

- Google AI Studio — Experiment instantly with the 31B and 26B MoE models directly in your browser, no setup required.

- Google AI Edge Gallery — Available on Android and iOS, lets you run the E2B and E4B models directly on your phone.

- Hugging Face, Kaggle & Ollama — Download the model weights for free and run them locally on your own hardware.

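- LM Studio & llama.cpp: LM Studio is a beginner-friendly desktop app that makes running Gemma 4 locally as simple as installing an app, while llama.cpp is a lightweight command-line runtime for users who want more control.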

- Vertex AI on Google Cloud — For teams that need to scale to production with enterprise compliance and security guarantees.

For deeper customisation, Python bindings for LiteRT-LM allow advanced users to build fully tailored on-device AI pipelines. The CLI tool works on Linux, macOS, and even Raspberry Pi — making Gemma 4 one of the most accessible AI models ever released to the general public. Whether you want to experiment in five minutes or build a production system, there is a path for you.
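If you go the Ollama route, a local model can be queried over Ollama’s HTTP API using nothing but the Python standard library. The sketch below assumes Ollama is running on its default port and that a Gemma 4 model has already been pulled; the `gemma4` tag is a placeholder assumption, so check the Ollama model library for the exact name.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # ASSUMPTION: placeholder tag, verify the real name in Ollama


def build_generate_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # Requires a running Ollama server with the model already downloaded.
    print(generate("Explain open-weight models in one sentence."))
```

Because the request goes to `localhost`, the prompt and the response stay on your own machine.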

Who Can Benefit Most from Google Gemma 4?

- Developers & Programmers

  - Build AI apps for free. The Apache 2.0 licence means no API fees, no usage limits, and full commercial rights to anything you build.

  - Code offline privately. Gemma 4 can write, complete, and debug code entirely on your local machine with no internet needed.

- Content Creators & Marketers

  - Create multilingual content at zero cost. With 140+ language support, producing global content no longer needs a paid AI subscription.

  - Automate content tasks privately. Gemma 4 can draft, research, and format content autonomously, all on your own device.

- Students & Researchers

  - Access world-class AI for free. Available at zero cost on Hugging Face and Kaggle, platforms many students already use.

  - Work with sensitive data safely. Run experiments entirely on your own machine with no data passing through external servers.

- Small Business Owners

  - Replace expensive AI subscriptions. For many everyday tasks, Gemma 4 can deliver results comparable to paid tools while running free on hardware you already own.

  - Get started without technical skills. Tools like Ollama and LM Studio make setup simple enough for non-technical users.

Final Thoughts

Gemma 4 is not just another AI model release; it is a genuine turning point in how AI is accessed, owned, and deployed. With top-three leaderboard performance among open models, a permissive Apache 2.0 licence, support for 140+ languages, and the ability to run on a smartphone offline, it lowers the barrier to powerful AI considerably. One of the biggest AI launches of 2026 is here, it’s free, and anyone can use it starting today.

Agrodut is a managing director and seasoned digital marketing specialist passionate about SEO. With 15 years of experience, he has helped numerous businesses elevate their online presence and achieve higher search engine rankings. A results-driven professional, Agrodut Mondal stays updated with the latest SEO trends and best practices to ensure cutting-edge strategies. When not optimising websites, he enjoys exploring new technology and sharing insights through writing and speaking engagements. Connect with Agrodut for expert guidance on unlocking your website’s full potential in the digital.